Hyper Flow 971991551 Neural Node

The Hyper Flow 971991551 Neural Node is presented as an integrated unit that combines sensing, computation, and communication. Its design relies on adaptive resource allocation and the coordination of parallel submodules to sustain low-latency data flow, with emphasis on sensing accuracy, processing efficiency, and fault tolerance in scalable deployments. Open questions remain about real-world interoperability, governance, and readiness assessment. The sections below examine the metrics and trade-offs that determine practical applicability and deployment strategy.

How Hyper Flow 971991551 Neural Node Works

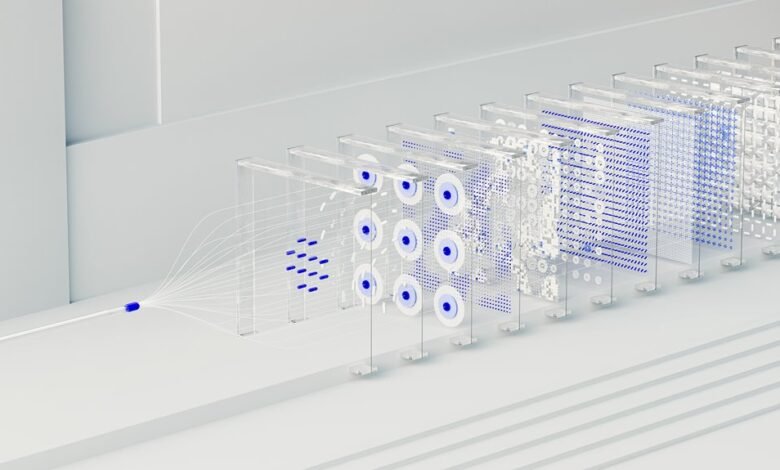

The Hyper Flow 971991551 Neural Node operates as a modular processing unit that integrates sensing, computation, and communication within a single framework. It splits input streams into smaller units, allocates resources to them, and coordinates parallel submodules so that data keeps moving with low latency.

The Neural Node balances sensing accuracy against processing efficiency, which supports adaptive routing, fault-tolerant operation, and scalable deployment. The integration is systematic, and flexibility is kept within defined limits.
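The split, allocate, and coordinate cycle described above can be illustrated with a minimal Python sketch. It is not the Neural Node's actual implementation, which the source does not specify. The function names (`split_stream`, `allocate_workers`, `process_chunk`, `run_node`), the chunk size, and the worker cap are all hypothetical, and averaging stands in for whatever a real submodule would compute.

```python
from concurrent.futures import ThreadPoolExecutor


def split_stream(samples, chunk_size):
    """Cut a list of samples into consecutive chunks of at most chunk_size."""
    return [samples[i:i + chunk_size] for i in range(0, len(samples), chunk_size)]


def allocate_workers(num_chunks, max_workers):
    """Use one worker per chunk, capped at max_workers and never below one."""
    return max(1, min(num_chunks, max_workers))


def process_chunk(chunk):
    """Hypothetical submodule: averages the chunk as a placeholder computation."""
    return sum(chunk) / len(chunk)


def run_node(samples, chunk_size=4, max_workers=3):
    """Split the input, size the worker pool, and process chunks in parallel."""
    chunks = split_stream(samples, chunk_size)
    workers = allocate_workers(len(chunks), max_workers)
    with ThreadPoolExecutor(max_workers=workers) as pool:
        # pool.map preserves input order, so results line up with chunks.
        return list(pool.map(process_chunk, chunks))


if __name__ == "__main__":
    print(run_node([1, 2, 3, 4, 5, 6, 7, 8, 9, 10]))  # [2.5, 6.5, 9.5]
```

Sizing the pool from the number of chunks is one simple reading of "adaptive resource allocation"; a real system would more plausibly weigh queue depth, priority, or measured load.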

Why It Excels: Core Capabilities and Benefits

The core capabilities of the Hyper Flow 971991551 Neural Node derive from its integrated sensing, computation, and communication subsystems, which together enable precise data acquisition, efficient processing, and reliable inter-module coordination. The analysis assesses scalability, reliability metrics, innovation throughput, and security posture. It points to systematic advantages, measurable resilience, streamlined deployment, and disciplined risk management across modular configurations and evolving workloads.

Real-World Use Cases Across Domains

The Neural Node is described as scaling across heterogeneous data sources and operational contexts, with data interoperability supporting collaboration across domains.

The intended result is adaptability, efficient task execution, and consistent reliability across different domain-specific constraints and deployment scales.

Evaluating Deployment Readiness: Metrics, Trade-offs, and Next Steps

Evaluating deployment readiness requires a structured assessment of metrics, trade-offs, and actionable next steps to confirm that the system is viable in each target context. The analysis identifies knowledge gaps and includes a risk assessment that balances performance, cost, and governance. A disciplined framework:

- defines success criteria

- benchmarks readiness

- outlines remediation and rollout phases

The results support informed decisions without committing to a full rollout prematurely.
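The three framework steps can be sketched as a simple threshold check: define criteria, compare measurements against them, and list the gaps that need remediation before rollout. This is an illustrative sketch only. The metric names, thresholds, and measured values below are invented for the example and do not come from any published Hyper Flow specification.

```python
from dataclasses import dataclass


@dataclass
class Criterion:
    """One success criterion: a metric name and the threshold it must meet."""
    name: str
    threshold: float
    higher_is_better: bool = True

    def passes(self, measured):
        if self.higher_is_better:
            return measured >= self.threshold
        return measured <= self.threshold


def assess(criteria, measurements):
    """Return (ready, gaps); gaps lists criteria that failed or have no data."""
    gaps = []
    for c in criteria:
        value = measurements.get(c.name)
        if value is None or not c.passes(value):
            gaps.append(c.name)
    return (not gaps, gaps)


# Hypothetical criteria and benchmark results, for illustration only.
criteria = [
    Criterion("p99_latency_ms", 50.0, higher_is_better=False),
    Criterion("uptime_pct", 99.9),
    Criterion("throughput_msgs_per_s", 1000.0),
]
measured = {"p99_latency_ms": 42.0, "uptime_pct": 99.5}

ready, gaps = assess(criteria, measured)
print(ready, gaps)  # False ['uptime_pct', 'throughput_msgs_per_s']
```

Treating a missing measurement as a gap, rather than a pass, keeps the check conservative: a metric that was never benchmarked blocks rollout until it is measured.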

Conclusion

The Hyper Flow 971991551 Neural Node demonstrates a disciplined integration of sensing, computation, and communication to support adaptive routing, fault tolerance, and scalable deployment. Its modular orchestration enables resource-aware data flow and parallel submodule coordination, in line with governance and risk-management goals. Conceptually, it works like a well-channeled network of rivers feeding a single dam: many inputs, directed in an orderly way toward one controlled point. The approach offers measurable readiness, though the trade-offs between latency and throughput, along with deployment complexity, call for careful, data-driven evaluation before a phased rollout.